Why do we trust? It’s a feeling that develops so naturally it’s almost difficult to evaluate, but trust is logical as well as emotional, and the fact that it can render us vulnerable makes it important to analyse. It’s safe to assume that trust is something that inevitably develops between people as their relationship evolves: like an invisible glue that seals cracks of uncertainty. However, if we are focusing specifically on feelings between people, it’s also safe to assume that a healthy level of trust will only go as far as reliance and comfort. For example, you may seek guidance from a partner or ask a colleague for help, but the advice you are given should influence rather than determine the decisions you make. After all, healthy exchanges consist of ‘whys’ rather than mindless agreements.

On the other hand, let us consider our trust in technology. If you wanted to know the weather tomorrow, the distance between two destinations, or indeed the answer to many questions, you would probably consult your phone rather than a passer-by on the street. This is because you know that access to the Internet gives these devices a far vaster source of information than any human has. However, it’s important to remember that this trust is purely logical. Any emotional level of trust would be prohibited by the restrictions of a screen. It’s safe to bet you wouldn’t tell your laptop about your day at work, or ask for its advice on how to deal with a colleague, because you’d perceive a difference between human empathy and technological intelligence. With current technology, our trust extends as far as intelligent and informative sources go, but ends where questions of best interest come in. After all, how can something know what is best for us if it can’t think like us?

It is questions such as this one that have led technology developers to create new software that is redefining the relationship people have with technology. Voice recognition is a hot topic, and consumers are integrating devices like Siri, Alexa and Google Home into parts of their everyday lives. According to a report from Technavio, ‘the voice recognition market will be a $601 million industry by 2019’, and ComScore predicts that by 2020, ‘50 percent of all searches will be voice searches.’ From a promotional standpoint, the idea behind these devices is that they can give you a quicker, easier and more seamless experience. But how are they changing the way in which people perceive technology? Consider Amazon’s virtual assistant ‘Alexa.’ Would you describe her as a friendly piece of technology, or as a super-intelligent friend? Just consider the automatic way that we say ‘her’ rather than ‘it.’ It might seem a long way from robots roaming the streets, but it is these small characteristics that make something appear ten times more human.

In light of this, it is unsurprising that brands are hungrily turning to voice platforms in order to reach their customers. Whisky brand Johnnie Walker has collaborated with Alexa ‘to guide participants through tastings, recommended blends and practical tips.’ Spirits brand Patron Tequila is similarly using Alexa ‘to teach consumers about tequila facts and cocktail recipes.’ From a brand perspective this is ideal, as the assistant’s tone of voice can be programmed to sound much closer to the intended brand voice than a sales representative’s ever could. For brands like Johnnie Walker and Patron Tequila, it’s like hiring the perfect barman. More importantly, from a consumer perspective, these brands suddenly have the benefit of appearing more human, which makes them easier to trust.

So what does this mean for brands in the long run? We could even go so far as to say that the consumer’s ability to talk with a brand could be leading us into a cognitive era of advertising rather than a digital one. Indeed, advertising is predicated on the core belief that getting someone to pay attention to something else is valuable, but with brands using voice to connect with consumers more and more, the value will more likely be in getting consumers to converse with brands. An example of this is IBM’s new ‘Watson Ads’, which ‘allow customers to have one-to-one dialogues with brands in real time and ask for extra information.’ If this is the case, then what a brand deems to be important will change correspondingly. Currently, brands are concerned with the semantics and syntax of their messages. When you listen to an advert you can be sure a great deal of planning has gone into what is being said, ensuring that the message is as effective as possible. However, the ability to talk with consumers rather than at consumers means that brands will need to focus a lot more on how something is being said. When UI becomes a matter of designing friendships, and software programming could later blossom into intimate relationships, the sentiment behind a message might well become the most crucial part of it. An example of this would be a consumer being able to relate to a brand’s message through listening to its tone, or whether its voice goes up or down at the end of a sentence.

When we consider this transition into a new realm of communication, we have to ask ourselves what companies and brands are doing to achieve a new realm of understanding, and where this could possibly lead us. When devices like Alexa are awake, they are listening to everything we say. Considering that humans can speak 150 words per minute but type only 40, it doesn’t take a mathematician to figure out that they are taking away a lot of data. An alternative way to think about this is that these voice-first devices are learning nearly four times as much about us in the space of a minute as any other device could. And that’s not even considering all the other times they’re listening and you’re not paying attention… what about those arguments with your partner you didn’t want anyone else to hear, or those late-night discussions with your housemate about a stressful day at work? At ‘Your Favourite Story’, we have Alexa switched on for the majority of the week, meaning she has all of our conversations stored, which say something about the way we interact, share and behave at work.

The more pressing question this raises is: what is happening with all this data? According to research, ‘Amazon teaches Alexa about dialect and intonation by feeding her data that it gets from voice inquiries.’ Google segments the audio it receives, and mixes this up in order to teach its AI new dialogue patterns. What is more, demand for AI talent is skyrocketing, having more than doubled in the last three years, and interestingly the most in-demand segment is machine learning. When American journalist George Anders asked Alexa’s head scientist why 215 slots had recently opened up for machine learning experts, he laughed and said ‘it’s a complex task’, but is there more to it than that? Consider all the tech giants who are currently using voice command devices: these are all global brands whose business is built on knowing as much about us as they can. Google needs to know about the way we use the internet, Amazon needs to know about what we buy online, and Microsoft needs to know how we use software. Moreover, brands compete by trying to understand consumers better than other brands do, so it’s always in their interest to learn more about the way we behave and the way we think.

Imagine the scope for a brand to influence our decisions and shopping habits if it could understand what we were feeling from the tone, pitch and rate of our voice. It’s much more difficult to mask what you’re feeling in how you say something than in what you’re saying. You might well argue that you’ll just turn your device off, but the question is whether you’ll really want to.

A recent BBC article reported that ‘dozens of reviewers on Amazon’s website call Alexa their “new best friend” and one describes her as “part of the family”.’ In June 2017 Darren Austin, director of product management at Microsoft, wrote a blog post titled ‘How Amazon’s Alexa hooks you’ in which he theorises that ‘an inevitable dependence on Alexa comes from the way that her responses are like reward systems, in that they can make you feel more relaxed.’ What is more, a trending request for Alexa now seems to be the phrase ‘help me relax’, in response to which Alexa begins to play soothing sounds. As Austin suggests, it is responses such as these that will hook people, but the pull will ultimately be much stronger than the urge to flick through an Instagram feed. Human characteristics lead to human emotions: when we desire, envy or become fascinated by these devices, will we really want to turn them off? It leaves us in some kind of inescapable smart loop - the more this technology learns about us, the more it knows what to say, which in turn makes us need it more.

Reflecting on all of this, it seems like we may be passing through different phases, in which our relationship with technology intensifies. So far, technology has predominantly existed as a secondary entity to humans: when people choose to ask a question they turn their phone or computer on. With voice recognition devices, our relationship with technology has changed in its means of communication: we are beginning to talk with technology rather than at it. Considering the amount of data which is being gathered, the next question we need to ask is what will happen when this technology knows us better than other people know us, or even better than we know ourselves. What happens when technology is no longer a secondary entity, but part of our own being? Perhaps we are already seeing the first examples of this. MIT have recently created a headset called AlterEgo, which allows you to communicate with a computer system without ever having to open your mouth. Researchers in South Korea have made nanomembranes out of silver nanowires that serve as microphones. These nanomembranes can be attached to your skin and play entire concertos.

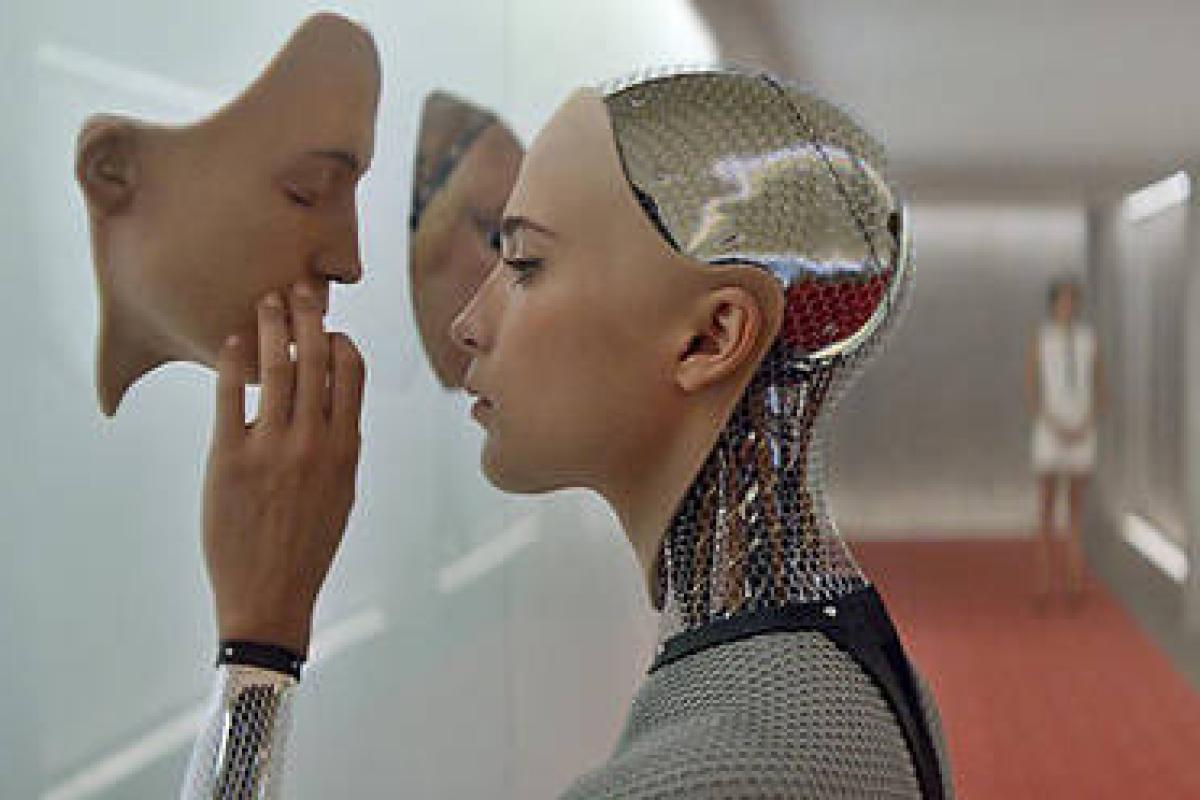

So what does the arrival of these new technologies indicate? It isn’t to say that giant robots will sprout out of the ground to declare war on us; it’s only in Hollywood that this kind of thing tends to happen. What it does suggest, however, is that technology might become so adept at understanding people that its psychological competence begins to outweigh our own. In 1974, Thomas Nagel wrote a philosophical paper on consciousness titled ‘What is it like to be a bat?’, in which he essentially argues that any species can only understand its own species best, because it can only understand the mental states it can itself experience. This, however, is an evolutionary understanding of self. Whilst understanding of other species cannot be programmed into people, it can perhaps be programmed into AI, and these technologies have highly accurate pattern recognition, which is only becoming more accurate. Ultimately, in the face of these emerging technologies, the question we must ask ourselves is whether we will be able to distinguish between what is real and what is fake. Whilst intelligence might be called artificial, does this mean that it cannot be human?

This piece was by Georgia Pounsford of Your Favourite Story.